I’ve been meaning to write this up for almost a year now. Time really does fly when you procrastinate. I do want to start by apologising for the lack of images; due to the sensitive nature of this, I’ve decided it best to remove many of the evidential images I took.

This originally goes all the way back to May of 2022, when one evening I had nothing to do and needed something to keep me busy. At the time I was looking for a new job, so I thought a great use of my time would be doing some security-related work. After looking through some news reports and posts by prominent members of the security community, I decided to focus on an issue that “should” have been resolved for quite some time: unauthenticated, public-facing Elasticsearch servers.

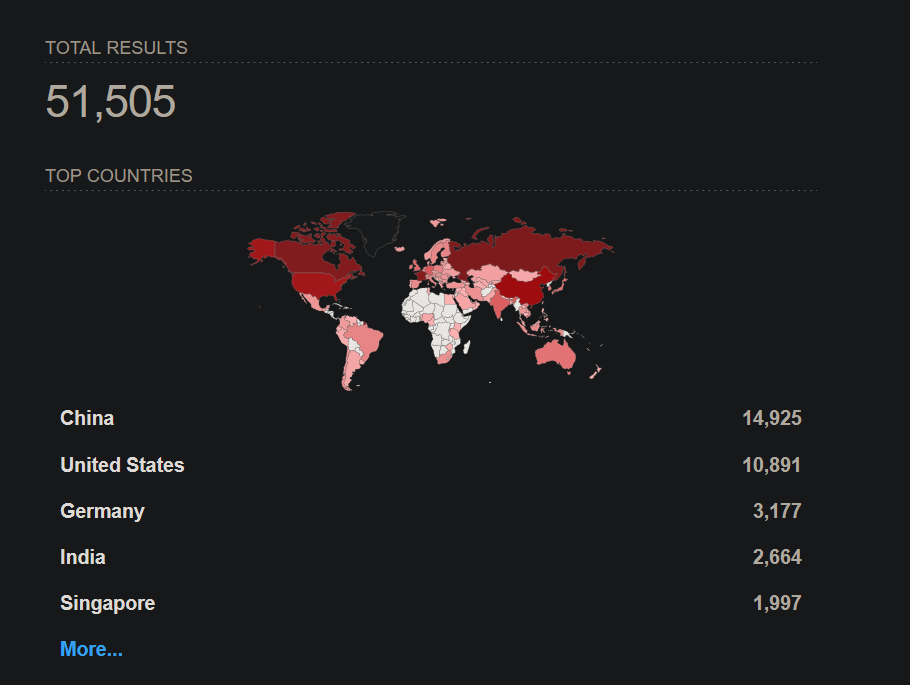

So I decided to load up Shodan and see what I could find, and oh boy, did I find some stuff….

Patch, what patch?

The first thing I saw after loading Shodan was tens of thousands of servers, which immediately made me think this was going to be quite a difficult task and perhaps I’d bitten off more than I could chew. But hey, I’ve started this now, might as well have a dig around and see what I can find. So I open up the first page and start clicking through IP addresses…

I didn’t even get to the end of the page before I came across a server leaking data from an unauthenticated port. Now, I should clarify here that many of these servers appeared to only have default setup data or contained limited log information (as reported by Shodan).

So I spent a few hours sifting through a few pages of results looking for any hosts that contained sensitive information and tried to reach out and report it wherever possible. On my first evening of searching I found and reported the following:

- A doctor’s surgery hosting client data

  - The owner stated this was test data

- A surveillance company working for governments

  - Never received a response, but nothing indicated that this data was highly sensitive.

- Auto shop offering vehicle repairs

- 3 other unknown servers with a combined 37GB of data

  - Reports were made to the server providers; most never responded or refused to assist

Clearly, this was a much bigger problem than I originally thought. In just a few hours I’d managed to find significant services, from all types of industries, hosting gigabytes of data publicly on the web.

This warranted further investigation.

Deep Dive

So now that I know that this isn’t a small issue, can I find any significant sets of data being publicly exposed? Spoiler alert: Yes, yes I can.

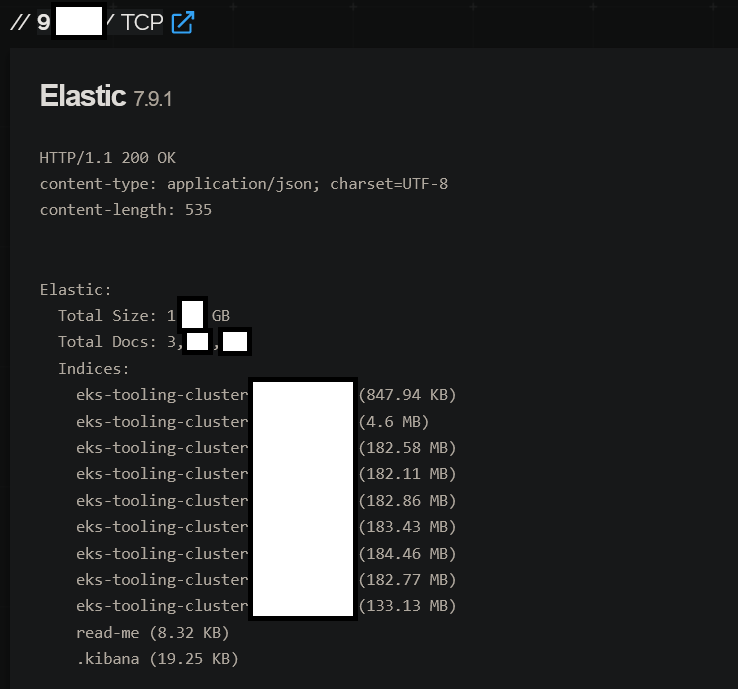

It didn’t take long, just 24 hours in fact, for me to come across a server leaking 6TB+ of data. Upon finding this I was shocked. Surely this was just an error? Or perhaps the data was just non-sensitive logs, like on many of the previous servers? No, this server contained very sensitive data. Data on the server included live-updating Outlook mail (not logs, the whole email), system logs, network data, Barracuda security logs (which I found very ironic), Apache logs and a bunch of other data. At the time of logging this finding, Elasticsearch listed the server as holding 12.3 billion documents.
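For anyone auditing their own cluster, a document total like this can be derived from the JSON that Elasticsearch’s `_cat/indices?format=json` endpoint returns. The snippet below is a minimal sketch of that arithmetic only. I’m not claiming this is how the figure above was obtained, and the index names and counts in `sample_body` are made up for illustration.

```python
import json
from typing import Any, Dict, List


def total_documents(cat_indices: List[Dict[str, Any]]) -> int:
    """Sum the per-index "docs.count" values from a _cat/indices JSON response."""
    total = 0
    for row in cat_indices:
        count = row.get("docs.count")
        if count is None:  # e.g. closed indices report no document count
            continue
        total += int(count)  # counts are returned as strings
    return total


# Hypothetical response body for illustration; not data from the server described above.
sample_body = """
[
  {"index": "mail-2022.05", "docs.count": "1200", "store.size": "3.1mb"},
  {"index": "apache-logs", "docs.count": "800", "store.size": "1.2mb"},
  {"index": "closed-index", "docs.count": null, "store.size": null}
]
"""

print(total_documents(json.loads(sample_body)))
```

If a request like that succeeds against your own server without credentials, anyone else on the internet can see the same numbers.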

Billion, with a b. This was truly insane.

My head raced. At first I thought maybe this was intentional? But no, the data on the server was too sensitive for that. Maybe the server was a honeypot? Possibly, but this would be by far the best honeypot I’d ever seen. Ultimately I came to the conclusion that this was real, and this was really bad.

The long road of reporting

Now, I have a whole rant on the process of responsible disclosures, but that’s for another day. Today we focus on the process of reporting this critical vulnerability.

So the first thing I did after finding this was to try and identify who the server belonged to. Some servers use handy hostnames, have files that list the owner or are on restricted networks. In this case, we ticked all 3 boxes. Great, I thought, this data gives me plenty to work with. So I checked the hostname to see where it would take me. Navigating to their website, I quickly came to the realization that I wasn’t dealing with some regular business with poor security practices; this was in fact a sector of a foreign government. Things just went from bad to worse.

Okay, I thought, it’s a government website, there will be plenty of emails dotted around for me to contact. Nope. I searched to see if they had a security.txt or anything that pointed to an IT-based email address at the company; nothing found. I managed to grab a Contact@ email address and that was about it. I couldn’t find anything else online at the time.

Given that this was a critical issue, I reached out to the Contact@ email immediately, and also reached out to the website hosting provider and asked for a security contact at that IP address to contact me via email. Emails were sent out in both English and a translation in their local language.
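For reference, the security.txt check boils down to looking in one of two places and pulling out the `Contact:` lines. Here’s a rough sketch of that, assuming the layout described in RFC 9116. The function names are my own, `example.org` is a placeholder, and the sample file is hypothetical; fetching the file is left out so the snippet runs offline.

```python
from typing import Dict, List


def security_txt_urls(domain: str) -> List[str]:
    """Return the locations where a security.txt file is normally published."""
    host = domain.strip().rstrip("/")
    return [
        f"https://{host}/.well-known/security.txt",  # location defined by RFC 9116
        f"https://{host}/security.txt",              # legacy fallback location
    ]


def parse_security_txt(text: str) -> Dict[str, List[str]]:
    """Collect field values from security.txt content, keyed by lowercase field name."""
    fields: Dict[str, List[str]] = {}
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#"):  # skip blank lines and comments
            continue
        name, sep, value = line.partition(":")
        if not sep:  # not a "Field: value" line
            continue
        fields.setdefault(name.strip().lower(), []).append(value.strip())
    return fields


# Hypothetical file content for illustration.
sample = """\
# Hypothetical example file
Contact: mailto:security@example.org
Contact: https://example.org/report
Expires: 2030-01-01T00:00:00Z
"""

contacts = parse_security_txt(sample).get("contact", [])
print(security_txt_urls("example.org"))
print(contacts)
```

An empty `contacts` list is what I was effectively left with here: no published route to anyone responsible for security.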

2 weeks go by, nothing.

Okay, no response in 2 weeks, maybe they’ve fixed the issue and just not told me? I go check and nope, still active, and the amount of data has increased. Shit. I start doubting myself at this point. Maybe I should have pestered them a bit more? At this point, I couldn’t do anything other than try to contact them again. So that’s what I did. I reached out to the company as best I could, using the emails on their website, checking users on LinkedIn, using any method possible to put me in contact with their head of security.

3 more days, radio silence.

At this point I’m getting quite annoyed. This data set is a vast trove of data, likely containing highly sensitive documentation, but alas, no one is listening. I’m screaming into the void.

So I start thinking again, what other ways could I reach out and get this problem solved? So I hop on Twitter and wouldn’t you know it, they have an account. I drop a message to their Twitter account, provide vague details of my finding, state the email address where I’ve contacted them in the past and ask them to reach out to me immediately.

4 more days go by, at this point the void is deafening.

So once again I reach out to their Contact@ email address and a bunch of random users at the company, and ask them to point me to their head of security/IT. I even went as far as to email the country’s Computer Emergency Response Team (CERT), as at this point this was a threat to the country. I’m sure you can see where this is going…

2 Weeks Later

At this point, the constant reporting and work in general were getting to me, so I decided to step back for a few days to stop myself burning out. I opened my mailbox back up and wouldn’t you know it, nothing. At this point we’re a month down the line since my original report and I’ve had no response, not even from the country’s CERT. Up until this point I’ve been quite reserved with how much data I’ve sent, as I don’t want to provide any data to the wrong person, even with the CERT. This time I decide to provide evidence, heavily redacted, to prove that terabytes of their data are available on the web. Well, it’s safe to say this was a wake-up call.

In less than 24 hours the CERT had reviewed my email, responded to me and put me through to the head of information security for this department.

Resolution

Finally, we’ve got a response. It seems my constant nagging and providing a small snippet of evidence to the CERT has started a fire under someone’s butt. From here, nothing too out of the ordinary happens:

- I received an email from the Head of Information Security for the department who was very keen to know the details of the vulnerability, how to access it and whether the data was still accessible.

- Provided evidence to confirm the vulnerability was still active and how they can replicate this.

- Received confirmation that the vulnerability exists and they were investigating

- Proceeded to receive no response for another 10 days, chased them

- Received a response stating that it took them a few days to investigate this

- Blamed a third party

- Surprisingly, provided a full breakdown of the review they carried out internally and the fixes put in place. Notable points from this were:

  - Firewall review

  - Set up a security breach reporting email

  - Review processes

- Stated they were investigating whether exfiltration of the data had taken place.

Overall, this was still a bit of a messy resolution, but a resolution was reached and an internal review was apparently done, which is probably the best I could hope for. That being said, a number of the improvements they stated they would put in place don’t ever appear to have been made.

Lessons Learned

So what did we actually learn today?

Well, first off, we learned that Elasticsearch is still a complete shit show, where servers of all types are just left idly online for anyone to view the data (or ransom them, but that’s a whole other tangent…). We’ve also learned that in many cases responsible disclosure of vulnerabilities is a massive pain in the ass. You will spend huge quantities of time trying to find a contact within a business who inevitably won’t respond to you. Finally, we’ve learned that businesses (of all types) still don’t take security seriously. Many high-profile businesses/websites fail to publish a security.txt file under /.well-known/, a format that’s being implemented by many companies, nor do they provide any useful way to contact the relevant parties.

So my takeaway from this is that there need to be major changes in the industry. Companies need to have more stringent base security requirements, and those that handle any form of sensitive data should be required to hold these controls. Obviously, this isn’t something that’s possible globally, but a push to enforce this within the EU, UK and US could pave the way to a more secure global environment.

Without this change, I fear that we’re just going to see larger and more dangerous data breaches that will inevitably lead to serious real-world damage to individuals, not just businesses’ bottom lines.